Nino Ilveskero, Sales Director | Commercial Director

31.1.2023 10.15

Using ChatGPT and other generative AI in expert work – while putting responsibility first!

- AI

- Data governance

In the past few weeks, there has been a lot of talk about the effects and use cases of OpenAI’s ChatGPT. Social media has overflowed with views and experiences, and for good reason: ChatGPT is a phenomenon. At the beginning of 2022, generative AI was expected to become a more widespread technology trend within 2–5 years, yet in November 2022 a trial version was already made widely available.

We tasked Loihde’s AI experts with talking to ChatGPT about our future themes. It was a Friday afternoon in December, but the team worked enthusiastically, excited to build on the results with ChatGPT. At the end of the day, we asked ChatGPT to summarize the afternoon for an internal newsletter.

Using the same methods, any organization can start utilizing AI straight away, even if no internal decisions on the use of AI have been made and no AI systems have been acquired. AI will become part of the everyday life of people and organizations in the same way that the search engine Google did back in the day. Whereas Google gives us a list of links related to our search, ChatGPT presents the information contained in such links in the form of an answer. And there is more: ChatGPT can also provide direct input, for instance on software development. It is evident that this year more focus and resources will be directed towards the possibilities of ChatGPT and their utilization in organizational operations at all levels.

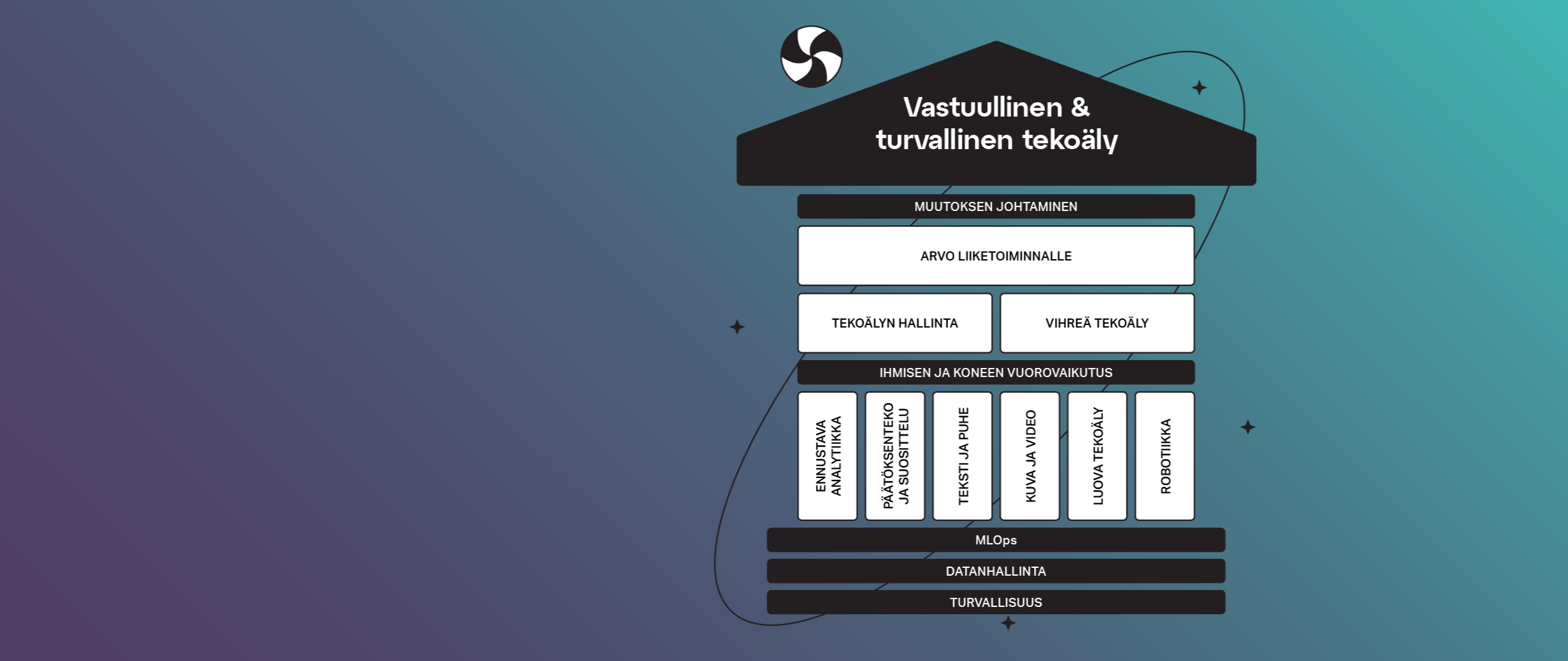

Therefore, we consider it important that all organizations create an AI governance model for themselves when they begin experimenting with AI. Loihde’s AI governance model, which is part of Loihde’s working methods, is aligned with our other governance models and instructions. For us, AI governance means that we, the employees, work responsibly. In the context of AI, responsibility primarily means that AI is developed and used in a controlled and legally compliant manner, and also that it is utilized in building a better future. Responsibility is supported, for example, by an organization having predetermined responsibilities, processes, and tools for the development, use, and monitoring of artificial intelligence.

What does ChatGPT have to do with the AI governance model?

For us, OpenAI’s ChatGPT, or more generally any open access AI solution, is one of four cases in the AI governance system:

- Open access AI, such as ChatGPT

- Applications using AI purchased from a third party

- In-house AI research and development

- Production of AI solutions for our customers and consultation

The EU Artificial Intelligence Act (EU AI Act)* is expected to be approved in 2023. The Act is also going to introduce a common regulatory framework for general purpose AI systems and will therefore set requirements for organizations utilizing such systems. It is also possible that generative AI will shake up this development at very short notice.

How is Loihde going to use ChatGPT and similar solutions?

Here at Loihde, we use the latest technology responsibly. Our operational methods include source criticism and fact-checking, as well as ensuring that new technologies are safe and reliable and that our customers benefit from their use. We want to challenge ourselves in creating new innovative solutions, which is why we will also experiment with different uses for ChatGPT.

Loihde’s ethical guidelines reflect our approved practices and our commitment to complying with laws and ethically sustainable principles. Each Loihde employee is responsible for ensuring that ethical principles are implemented in our business operations and that our working culture emphasizes respect towards others.

Loihde’s guidelines and practices for good governance create a foundation for responsible and safe AI governance.

We use the following practices in the use of generative AI (e.g., ChatGPT):

- We perform active research and experiments on the possibilities of using generative AI in strengthening expert work.

- We are transparent with our customers. If the product is partly generated by generative AI (e.g., code, images, text, or tables), we will disclose it.

- We are responsible for making sure that the use of AI solutions does not jeopardize our data security and data protection or that of our customers.

- Responsibility for the end result always and unequivocally lies with a Loihde employee (i.e., a real person). The user must take the limitations of the tool or model into account.

- We are responsible for ensuring that the materials or other products produced by us do not infringe the intellectual property and/or other rights of third parties.

- We are aware of the computing power these solutions require and of their impact on energy consumption, and we use the solutions responsibly.

- We ensure that our experts are familiar with the AI governance system and the practices of responsible and safe AI utilization.

With enthusiasm, and following these principles, we set out to see what the year 2023 will bring us in the field of AI, and especially generative AI. We also look forward to seeing progress in the introduction of governance models that promote responsible and safe utilization of AI!

*The Council of the European Union adopted its common position on the EU AI Act (draft) on December 6, 2022. The Council can start negotiations with the European Parliament after the Parliament has confirmed its position. Regulation of general purpose AI systems has been added to the proposal. General purpose AI systems are used for many different purposes, including the recognition and production of images and sound, and the translation of languages.
